Multimodal Image Refinement

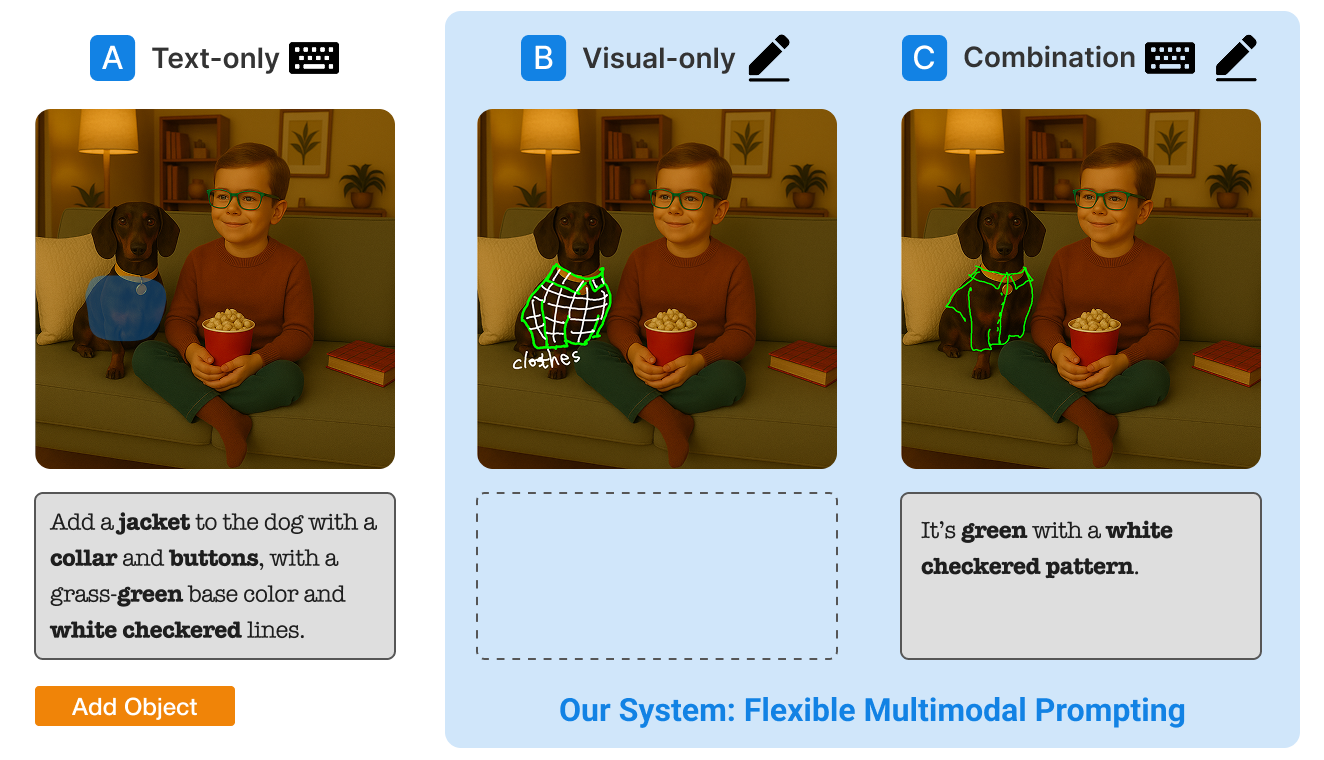

Designing and evaluating a multimodal interaction model for refining AI-generated images using text, sketches, and annotations. This project explores how users express intent and control AI outputs beyond text prompting.

Portfolio

Case studies in UX research, interaction design, and prototyping for AI systems, multimodal interfaces, and immersive environments.

Interaction design across human–AI systems, generative tools, and XR.

Designing an immersive AI workflow for generating and applying textures directly in VR environments. The project reduces context switching and supports real-time exploration of AI-generated outputs in 3D.

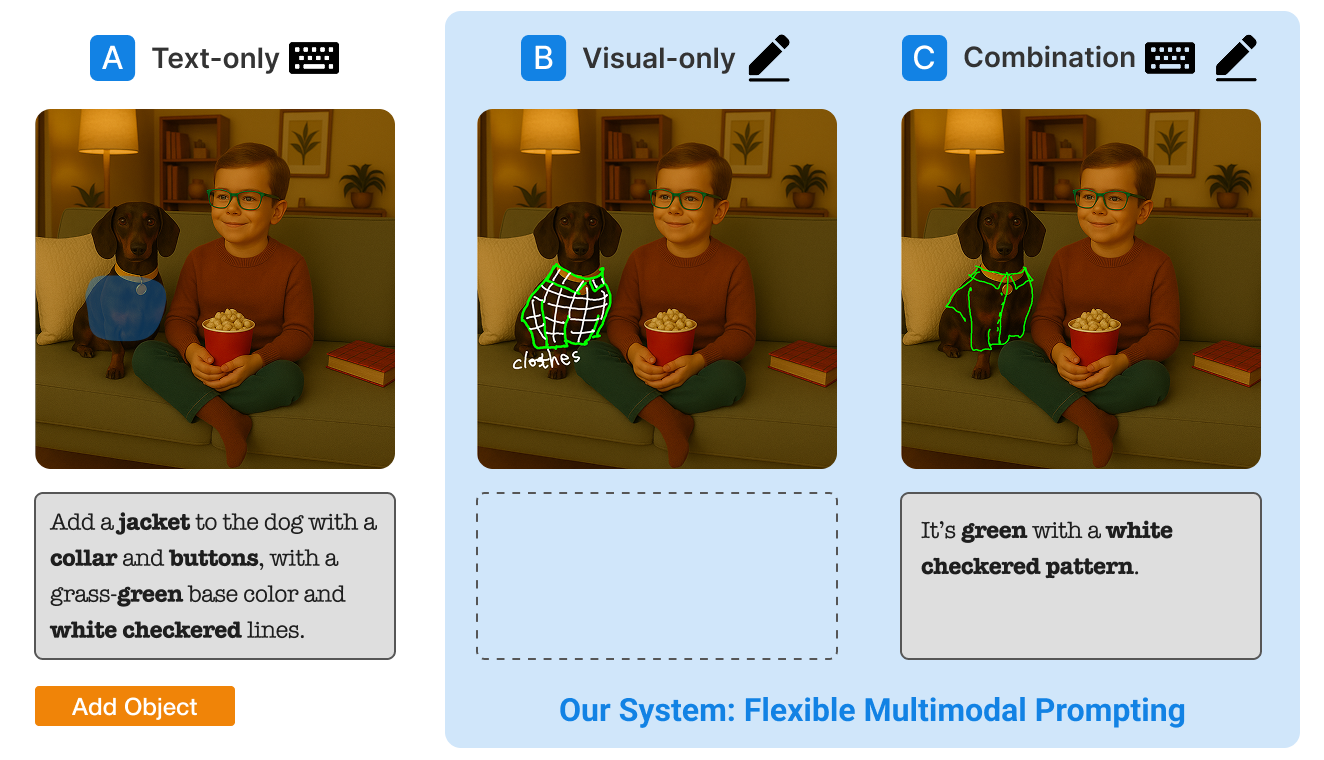

Investigating how professional designers use AI tools in practice and identifying design opportunities for more intuitive, controllable workflows.

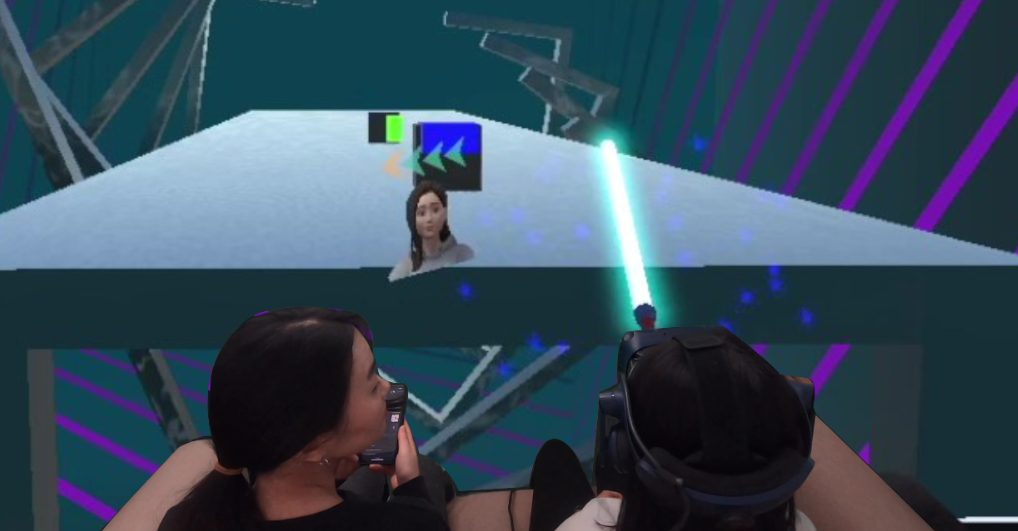

Designing subtle awareness cues that help users notice nearby people in VR without breaking immersion. The project balances attention, interruption, and presence through controlled user studies.